Advanced Techniques in Natural Language Processing: A Comprehensive Overview

The AI landscape is evolving at an unprecedented pace. From OpenAI’s GPT-4 Turbo to Google’s Gemini Ultra and Anthropic’s Claude, large language models are reshaping how we work, create, and solve problems. This comprehensive analysis explores the latest breakthroughs, technological advances, and what they mean for the future of artificial intelligence.

What’s the Latest Breakthrough?

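Cost and speed are the main drivers. Quantization shrinks models with only a small loss in accuracy: at 4-bit precision, the weights of a 70B-parameter model take roughly 35 GB and fit on a single 80 GB GPU. Speculative decoding, in which a small draft model proposes tokens that the large model verifies in parallel, can speed up inference, with reported gains of up to 3-4x depending on the model pair and workload. Lower per-token prices, especially for input tokens, make AI more affordable for enterprises. AMD’s MI300X now competes with NVIDIA’s H100, adding price pressure on the hardware side.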
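The single-GPU claim comes down to simple arithmetic on weight storage. The sketch below is a rough estimate only: it counts weights and ignores activations, the KV cache, and quantization overhead such as scaling factors. The function name `weight_memory_gb` and the 80 GB reference capacity (that of an H100) are choices made for this illustration.

```python
def weight_memory_gb(num_params: float, bits_per_weight: int) -> float:
    """Approximate memory for model weights alone, in GB (10^9 bytes)."""
    total_bytes = num_params * bits_per_weight / 8
    return total_bytes / 1e9


PARAMS_70B = 70e9          # 70 billion parameters
GPU_CAPACITY_GB = 80       # assumed reference capacity for one accelerator

for bits in (16, 8, 4):
    needed = weight_memory_gb(PARAMS_70B, bits)
    verdict = "fits" if needed <= GPU_CAPACITY_GB else "does not fit"
    print(f"{bits}-bit weights: {needed:.0f} GB ({verdict} in {GPU_CAPACITY_GB} GB)")
```

At 16 bits the weights alone need about 140 GB, which is why quantizing to 8 or 4 bits is what makes single-GPU serving plausible.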

Understanding the Technology

Large Language Models (LLMs) are neural networks trained on massive text datasets to predict and generate human-like text. They use the Transformer architecture (attention mechanisms) to understand relationships between words. Key metrics:

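• Parameters: OpenAI has not disclosed GPT-4’s size; a widely cited unofficial estimate is 1.76 trillion parameters. Larger doesn’t always mean better

• Training Data: Models are trained on trillions of tokens (terabytes of filtered text) from the internet, books, and research papers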

• Context Window: How much text the model can process at once (now up to 1M tokens)

• Latency: Response time – critical for real-time applications

• Cost: Input/output tokens priced in $/1M tokens
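To make the attention mechanism mentioned above more concrete, here is a minimal sketch of scaled dot-product attention in plain Python. It is a toy version under simplifying assumptions: one head, no learned projection matrices, no masking, and tiny hand-written vectors standing in for token embeddings. Production models use optimized tensor libraries instead.

```python
import math


def softmax(scores):
    """Turn raw scores into weights that sum to 1 (shifted by the max for stability)."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention(queries, keys, values):
    """Scaled dot-product attention: each query gets a weighted average of the values."""
    d_k = len(keys[0])
    outputs = []
    for q in queries:
        # Similarity between this query and every key, scaled by sqrt(d_k).
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k) for k in keys]
        weights = softmax(scores)
        # Blend the value vectors according to the attention weights.
        blended = [
            sum(w * v[j] for w, v in zip(weights, values))
            for j in range(len(values[0]))
        ]
        outputs.append(blended)
    return outputs


# Three toy "token" vectors; in self-attention they serve as queries, keys, and values.
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
for row in attention(tokens, tokens, tokens):
    print([round(x, 3) for x in row])
```

Each output row is a mix of all three token vectors, weighted toward the tokens most similar to the query. That weighting is how the model relates words to one another.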

Recent advances include Retrieval-Augmented Generation (RAG), allowing models to fetch current information instead of relying on training data cutoffs.
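The retrieval step of RAG can be sketched in a few lines. This example is a simplified stand-in, not a production design: it ranks documents by bag-of-words cosine similarity, where real systems typically use learned embeddings and a vector index. The documents, the prompt wording, and the function names `retrieve` and `build_prompt` are all hypothetical.

```python
import math
import re
from collections import Counter


def tokenize(text):
    """Lowercase the text and split it into alphanumeric words."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a, b):
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query, documents, k=2):
    """Return the k documents most similar to the query."""
    q = Counter(tokenize(query))
    ranked = sorted(documents, key=lambda d: cosine(q, Counter(tokenize(d))), reverse=True)
    return ranked[:k]


def build_prompt(query, documents, k=2):
    """Place the retrieved passages ahead of the question before calling a model."""
    context = "\n".join(f"- {d}" for d in retrieve(query, documents, k))
    return f"Answer using only the context below.\n\nContext:\n{context}\n\nQuestion: {query}"


DOCS = [
    "The refund policy allows returns within 30 days.",
    "Our office is closed on public holidays.",
    "Shipping takes three to five business days.",
]
print(build_prompt("What is the refund policy?", DOCS, k=1))
```

The model then answers from the retrieved passage rather than from whatever it memorized before its training cutoff.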

Which Companies Are Leading?

Anthropic (Claude Series):

• Claude 3 Family: Opus (most capable), Sonnet (balanced), Haiku (fast)

• Constitutional AI: A training method that steers the model with a written set of principles, building safety and alignment in from the start

• 200K Context: Can analyze entire books, codebases, project docs

• Focus: Reliability, accuracy, reducing hallucinations

Meta (Llama Series):

• Llama 2/3: Openly available weights, competitive with closed models

• Enterprise Applications: On-premise deployment for privacy

• Licensing: Free for most commercial use under Meta’s community license (the original LLaMA was research-only)

Real-World Applications

LLM technology is being deployed across industries:

Software Development: AI assistants such as GitHub Copilot and ChatGPT are now part of many developers’ daily workflow; figures as high as 80%+ are cited but depend on the survey. Companies like Google and Meta use AI to help write, test, and deploy code, with claimed speedups of 2-3x that vary widely by task.

Customer Support: In some deployments, AI chatbots reportedly handle 70% or more of routine inquiries, cutting costs and response times. Complex issues escalate to humans with full context.

Healthcare: On specific radiology tasks, AI image analysis reaches reported accuracy of around 95%, competitive with human radiologists. In drug discovery, AI has shortened early-stage candidate identification from years to months in some reported cases; full development and clinical trials still take years.

Finance: Risk analysis, fraud detection, portfolio optimization. AI models identify patterns humans miss. JPMorgan’s COIN (Contract Intelligence) reviews commercial loan agreements in seconds, work that reportedly took lawyers and loan officers 360,000 hours a year.

Content Creation: Marketing, copywriting, video scripts. Some companies report cutting production time by as much as 75%. Netflix uses AI to optimize subtitles and thumbnails.

Enterprise Search & Analytics: Companies search internal documents (emails, chats, files) using AI that understands context. Salesforce Einstein analyzes customer data to predict churn and recommend upsells.

Key Features and Capabilities

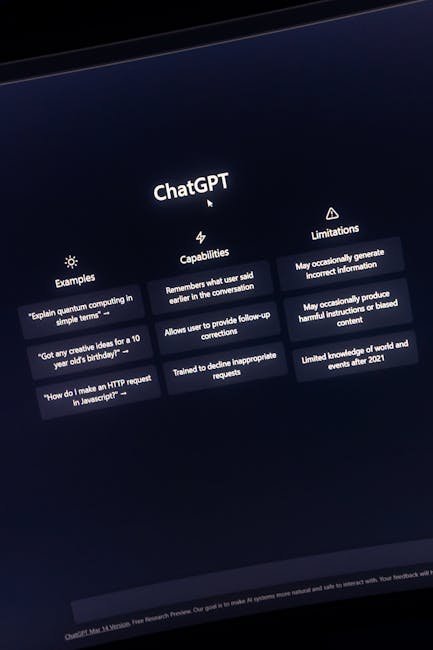

Key Capabilities of Modern LLMs:

✅ Understanding Context: Tracks conversation history within the context window, understands nuance, sarcasm, multiple languages

✅ Code Generation: Writes, debugs, optimizes code in Python, JavaScript, Rust, Solidity, etc.

✅ Reasoning: Solves math problems, logic puzzles, complex reasoning step-by-step

✅ Creative Writing: Stories, poetry, marketing copy, fiction adapted to style

✅ Analysis: Summarizes documents, extracts key information, identifies sentiment

✅ Multi-language: Understands and generates in 100+ languages

✅ Vision: Analyzes images, charts, screenshots, identifies objects

✅ Autonomous Actions: Newer models can browse web, write code, execute tasks

Limitations: Hallucinations (making up facts), outdated training data, context window limits, biases in training data, high computational cost

Performance Metrics

How AI Models Are Evaluated (2026 Benchmarks):

MMLU (Massive Multitask Language Understanding): 57 subjects tested

• GPT-4: 86.4%

• Claude 3 Opus: 86.8%

• Gemini 1.5 Pro: 87.9%

• Llama 2 70B: 68.9%

Math (MATH benchmark): Complex mathematical problem solving

• GPT-4: 52.9%

• Claude 3 Opus: 60%+

• Gemini 1.5: 58%+

Code (HumanEval): Writing functionally correct code

• GPT-4: 67.0% (as originally reported)

• Claude 3 Opus: 84.9%

• Gemini 1.0 Ultra: 74.4%
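HumanEval-style scores measure functional correctness: a generated solution counts only if it passes the task’s unit tests, and results are usually reported as pass@k. The sketch below shows both pieces under simplifying assumptions. The `passes` helper and the toy candidates are hypothetical, and real harnesses run untrusted code in a sandbox. The estimator follows the commonly used unbiased form, pass@k = 1 - C(n-c, k) / C(n, k), for n samples of which c are correct.

```python
from math import comb


def passes(candidate, test_cases):
    """A candidate is correct only if every test case yields the expected value."""
    try:
        return all(candidate(*args) == expected for args, expected in test_cases)
    except Exception:
        return False


def pass_at_k(n, c, k):
    """Probability that at least one of k samples drawn from n is correct, given c correct."""
    if n - c < k:
        return 1.0  # too few incorrect samples to fill all k draws
    return 1.0 - comb(n - c, k) / comb(n, k)


# Toy task: "add two numbers". Three sampled solutions, one of them wrong.
tests = [((1, 2), 3), ((0, 0), 0)]
samples = [lambda a, b: a + b, lambda a, b: a - b, lambda a, b: a + b]

correct = sum(passes(s, tests) for s in samples)
print(f"{correct} of {len(samples)} samples pass")
print(f"pass@1 = {pass_at_k(len(samples), correct, 1):.3f}")
```

Because scores depend on k, the sampling temperature, and the prompt format, published figures for the same model can differ between reports.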

Speed & Cost:

• GPT-4 Turbo: $10/1M input tokens | $30/1M output tokens

• Claude 3 Opus: $15/1M input | $75/1M output; Claude 3 Sonnet: $3/1M input | $15/1M output (the budget option)

• Llama (open weights): No per-token fee when self-hosted; you pay for your own hardware
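Per-million-token pricing translates into a per-request cost with one line of arithmetic. The sketch below uses the GPT-4 Turbo rates listed above. The request size (2,000 input tokens, 500 output tokens) and the monthly volume are hypothetical numbers chosen only to show the calculation.

```python
def request_cost_usd(input_tokens, output_tokens, input_price_per_m, output_price_per_m):
    """Cost of one request, given prices in USD per 1M input and output tokens."""
    return (input_tokens * input_price_per_m + output_tokens * output_price_per_m) / 1_000_000


# GPT-4 Turbo rates from the list above: $10 per 1M input, $30 per 1M output.
INPUT_PRICE, OUTPUT_PRICE = 10.0, 30.0

# Hypothetical workload: 2,000 tokens in, 500 tokens out, 100,000 requests a month.
per_request = request_cost_usd(2_000, 500, INPUT_PRICE, OUTPUT_PRICE)
monthly = per_request * 100_000

print(f"Per request: ${per_request:.4f}")
print(f"Per month:   ${monthly:,.2f}")
```

Output tokens cost more than input tokens, so workloads that generate long responses are priced quite differently from ones that mostly read long documents.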

Context Window: Larger = Can process more text at once

• Gemini 1.5: 1M tokens (~750,000 words)

• Claude 3: 200K tokens

• GPT-4 Turbo: 128K tokens
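A quick way to use these figures is to estimate whether a document fits in a given window. The sketch below assumes the ratio implied above (1M tokens for about 750,000 words, or 0.75 words per token). Actual ratios vary by tokenizer and language. The `reserve_for_output` allowance and the function names are assumptions made for this example.

```python
import math

# Ratio implied by "1M tokens (~750,000 words)"; a rough rule of thumb only.
WORDS_PER_TOKEN = 0.75

# Context window sizes as listed above.
CONTEXT_WINDOWS = {
    "Gemini 1.5": 1_000_000,
    "Claude 3": 200_000,
    "GPT-4 Turbo": 128_000,
}


def estimate_tokens(word_count):
    """Rough token count for a text of the given length in words."""
    return math.ceil(word_count / WORDS_PER_TOKEN)


def models_that_fit(word_count, reserve_for_output=4_000):
    """Models whose window holds the document plus room for the response."""
    needed = estimate_tokens(word_count) + reserve_for_output
    return [name for name, window in CONTEXT_WINDOWS.items() if needed <= window]


# A hypothetical 120,000-word manuscript.
print(estimate_tokens(120_000), "tokens (estimated)")
print(models_that_fit(120_000))
```

Documents that exceed every window have to be split into chunks or handled with retrieval instead.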

Comparison: How It Stacks Up

LLM Comparison Table 2026:

OpenAI GPT-4 Turbo: Best for reasoning, code, complex tasks. Expensive. 128K context.

Google Gemini 1.5 Pro: Excellent multimodal. Largest context (1M). Integrated into Google products.

Anthropic Claude 3 Opus: Strong on accuracy, safety, and domain expertise. Emphasis on reducing hallucinations. Balanced performance.

Meta Llama 3: Open-source. Can run locally. Cost-effective. Great for enterprises (no data sharing).

Amazon Bedrock Custom Models: Enterprise integration, compliance, fine-tune on your data securely.

Microsoft Copilot (OpenAI GPT-4): Integrated into Office 365, Windows, GitHub. Accessibility focus.

Specialized Models:

• Code: Tools such as Cursor and GitHub Copilot pair general models with editor integration and often beat a plain chat interface for coding

• Medical: Med-PaLM specialized for healthcare

• Finance: BloombergGPT optimized for financial markets

Recommendation: Choose based on use case. For general tasks: Claude or GPT-4. For privacy: Llama. For vision: Gemini.

What’s Coming Next?

What’s Coming in AI (2026-2028):

🚀 Reasoning Models Evolution: From pattern matching → genuine reasoning approaching AGI capabilities

🚀 Multimodal Mastery: Single models seamlessly handling text, images, video, audio, 3D understanding

🚀 Real-Time AI Agents: Autonomous systems that perceive, plan, execute, adapt without human intervention

🚀 On-Device AI: Running powerful models on phones/laptops without cloud dependency (privacy + speed)

🚀 Quantum Computing Integration: Speculative for 2027-2028; proposed quantum accelerators could speed up certain AI-related tasks, though claims such as 1000x remain unproven

🚀 Cost Reduction: Models becoming commodity computations. $1 for 1M tokens by 2027

🚀 Specialized AI Stacks: Vertical solutions (legal AI, medical AI, scientific AI) dominating enterprise

🚀 AI Safety & Regulations: EU AI Act enforces transparency, explainability, accountability

🚀 Neuromorphic Hardware: New chip architectures that mimic the brain could cut energy consumption substantially (figures as high as 100x are claimed)

Bottom Line: AI transitions from fascinating tech to essential infrastructure. Like electricity, every business will depend on it.